Back quite a few months ago we started having an issue where our Cisco IP phones would randomly de-register from our CUCM call manager (CM) servers. This affected nearly 200 phones in five locations, with seemingly no pattern to explain their behavior. Interestingly, in-process calls were not dropped; the phones would only de-register when idle, leaving their primary call manager server and failing over to their secondary.

As seems typical of the subjects of many of my blog posts, searching online turned up many links to similar issues – however they were all dead ends. Either enough was different in our network that those links didn’t apply, or we were able to quickly eliminate the potential causes suggested by those other articles.

Before I go any further…fair warning, this is going to be a long post. It’s summarizing months of troubleshooting; that by itself would make it lengthy. But beyond that, I prefer to write these posts in narrative style because this blog is as much about the process of troubleshooting as it is the technical issues themselves. Nonetheless there should be some useful technical info here for anyone troubleshooting a similar problem, even if your symptoms or ultimate cause are not completely identical.

One more note: We have a fairly large L2 network that’s Cisco end to end, with on average three L2 “hops” between our furthest switches and our core. There are a few instances where it may be as much as five switches in a given path. While we of course add or replace switches from time to time, it’s a fairly steady-state network, and most importantly it’s been reliable. We’ve been running IP phones for five-plus years, and been on our current version of call manager (8.6) for roughly two years with no issues that we were aware of. We have IP video surveillance and a large number of Cisco Meraki MR34 access points as well, all of which has worked fine.

Back to our situation…

Of course the first step in troubleshooting a new problem should be to figure out what changed. In our case: nothing. We had previously replaced our core switch, upgrading from a 4507R Sup IV to a 4510R+E Sup 8E, but that happened about six months prior and thus seemed unlikely as a possible cause since the timing didn’t match up.

Next, one would typically look at the commonalities between problem areas. We were seeing the problem on different model phones, connected to different model switches, different distances away from the core, on different WAN links, etc. There was no pattern to time, no pattern to network load, and basically nothing we could establish as common, other than our core switch and our call manager servers. We did have two locations without problems, but we could not see anything particularly unique to their configuration to explain that.

Since it seemed less likely that the core switch suddenly started acting up, we opened a TAC case with the voice team. They gave a frustratingly superficial review of the servers (physical Cisco MCS boxes) and advised us to upgrade the firmware on our phones. I saw no reason to do that since an older firmware doesn’t all of a sudden stop working, and they could not cite a specific bug related to our problem that was fixed in a later firmware. I don’t believe in changing firmware (or a driver) that has been working fine without a very good reason to do so; you’re just as likely to introduce new bugs as fix old ones.

One helpful thing they had us do was to install the Cisco Real-Time Monitoring Tool, or RTMT. (I’m not including a link as it seems to vary by Call Manager version.) It’s worth noting the “real-time” monitoring tool is not, in fact, real time. It’s close enough though for our purposes. Essentially the RTMT shows various Call Manager events; in our case we were specifically looking for phones that unregistered. Once again we could find no consistent pattern between phones, but what the RTMT did show – and this was important – was that most of the time, when one phone unregistered, others did as well. Additionally, when a group of phones deregistered they would often be from all across the network; this made it highly unlikely to be something specific to a particular switch or WAN link; instead whatever was happening appeared to be network-wide.

This and some other investigation led Cisco to conclude everything was fine on the voice side and there was an issue with the network. While they ultimately turned out to be correct, they seemed to come to this conclusion because of a lack of evidence that it was a voice problem rather than any specific evidence that it was something else. Call Manager – at least on version 8.6 – seems to be a bit of a black-box; it’s not easy to get much troubleshooting data out of it.

We next opened a ticket with the switching team, but in the meantime we ran some packet captures of our own to try and understand the phone registration and deregistration process better.

We already knew from the voice team that the 3rd gen phones we use – mostly 7962s and 7965s – send a keepalive every 30 seconds to their primary call manager server and every 60 second to their secondary. The voice team contended these keepalives were being missed and that was causing the phone to deregister. Our packet captures confirmed that – mostly. What we saw was that once the keepalive was sent by the call manager, there was only a two second window for it to be received. If anything happened to delay that keepalive more than two seconds, the phone would immediately disconnect from its primary server and connect to its secondary. It would then almost immediately connect back to the primary (since it was, in fact, available) since we had our phones set to fall-back.

TAC level one switching wasn’t much help, and since we were at a standstill with the call manager team, I asked my Cisco VAR to escalate the case. This got my local sales rep and sales engineer (SE) involved, and got the case itself – which had by now been going on for a few weeks – moved to the high level technical groups for voice and switching. This began a semi-regular series of calls with our SE, the voice team, and the switching team to try and narrow down the cause. Switching was the primary point of contact since things still pointed towards a network issue, but once we got the right people involved at voice, to their credit, they were always involved in the calls.

Everyone agreed the next step was to try and do an end to end packet capture and catch a phone deregistering so we could see where along the path packets were being lost. Only problem with that is we had random phones disconnecting at random times; capturing traffic for a specific random event simultaneously at four or five locations along a given path wouldn’t be easy. We nonetheless had no choice but to try.

The first thing we did was to enable “Span to PC port” within Call Manager. This forwards traffic intended for a phone to the PC connected to that phone, allowing Wireshark to be used to capture that phone’s traffic. We did this in five or six locations. Everywhere else along the path we had to configure span sessions on our switches and setup laptops with Wireshark logging to a circular buffer. It took a few days but we eventually got lucky and captured a phone deregistering; five large packet captures were then sent off to Cisco for analysis. (Incidentally, IOS-XE and apparently newer versions of IOS have the built-in ability to capture packets to Wireshark pcap format. This is extremely useful and saves having to connect an external device to a span session.)

The packet captures were our first real break. They showed it wasn’t the keepalives per-se that were being missed. Instead the TCP session between the phone and call manager was being torn down because of missed TCP acknowledgements. What seemed to happen is packets would get lost and – as a lost TCP packet should be – retransmitted, but all except the last of those retransmissions was also lost. By time the final retransmission was received the phone had disconnected. This meant we had a more fundamental TCP problem, not specifically related to the phone / Call Manager exchange.

At this point troubleshooting moved towards trying to determine where packets were being lost. Voice quality itself was fine and as noted earlier, in-process calls were not dropped, and so substantial packet loss seemed unlikely. However, while voice traffic does receive QoS prioritization, the keepalives are not typically prioritized. This meant it was possible – if somewhat unlikely – that voice packets could be traversing the network fine but their keepalives could be getting dropped by queues that were too busy.

This lead to checking input queues on multiple switches as well as tweaking QoS parameters, however while we did see some queue drops we could not correlate them to phone deregistrations. There were switchports that showed drops but no associated deregistrations, and conversely ports without drops that had experienced deregistrations. Yet again, there was no discernible pattern.

Truth be told we spent a lot of time going in circles. The core switch was looked at thoroughly since it was one of only two points of commonality, and there was a period of time where we discussed the possibility of a bug in IOS-XE, especially since the Supervisor 8E was (is) still a very new platform. Eventually that was ruled out since it appeared the packet loss was happening downstream.

There was also a lot of focus on some old Catalyst 2950s we were still using. While these did not have any phones directly connected to them, they were in some cases in front of switches that did support phones. But issues specific to the 2950s were ruled out – at least at the time – because we had significant phone deregistrations in one location that was not behind a 2950. Seemingly another dead end.

Eventually a second high-level switching tech got involved, and taking nothing away from the other techs that worked with us, sometimes it’s good to get a fresh set of eyes on the problem.

The new tech immediately wanted to investigate spanning tree. Spanning tree is itself a complicated topic and not one I want to explain in depth here, so to keep it simple and provide enough info for the rest of this post to make sense:

- Spanning tree is designed to prevent switching (L2) loops in environments with redundant L2 links

- It does this by first electing a root bridge (switch)

- Once that’s done, every other switch determines its own lowest-cost path back to the root bridge and uses only those links (so, one single link per switch)

- (Almost) any switch port coming up or going down anywhere causes a topology change notification (TCN) to be sent out everywhere

- Those TCNs force switches to flush their ARP cache and reevaluate their path back to the root bridge

- Ports with “portfast” enabled do NOT generate TCNs when going up or down, which is one of the reasons edge ports should have portfast enabled

The real breakthrough was when this tech discovered he was able to cause phones to deregister by generating TCNs, which is easy enough to do by simply bringing a non-portfast port (typically a trunk) up or down. While it didn’t happen with every TCN and the number of phones affected would vary, this now meant we had a reliable means of duplicating and thus testing the problem. And since we now knew it was TCNs causing the deregistrations, this pretty conclusively pointed towards a spanning tree issue.

You can use the show spanning-tree vlan xxx detail command to see when the last TCN occurred and from which port it originated, although older IOS versions may not show as much detail.

In reviewing our spanning tree setup we discovered a few problems. First of all, the root bridge for many of our VLANs was one of our oldest switches, located in a remote location. Like many larger environments, we are running PVST which runs a separate instance of spanning tree for each VLAN, hence the “per VLAN spanning tree” name. A root bridge had not been explicitly configured for some VLANs, including our voice VLANs, and in the absence of explicit configuration the switch with the lowest MAC address (which will tend to be the oldest) becomes the root bridge.

Use show spanning-tree root to show the ID of the root bridge as well as the port through which the switch in question reaches the root bridge.

A misplaced root bridge can be a huge issue if you are running redundant links since it may send traffic through a circuitous path back to the core. In our case we are not running any redundant links so it really wasn’t hurting performance. Nonetheless we addressed this by explicitly setting our core switch as the spanning tree root for all our VLANs.

Spanning-tree vlan 1-4094 priority 0

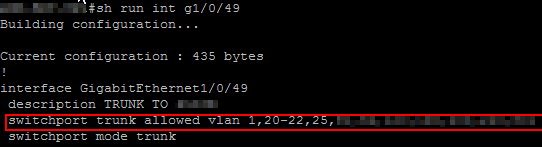

The second issue was we were not pruning our trunks. Every VLAN was allowed on every trunk, which meant a TCN for any VLAN would propagate across every trunk. Essentially then a wireless access point in one building going down would trigger a TCN on a voice VLAN in another building, etc., even though they had nothing to do with each other. To fix this, we manually went through a couple hundred trunks and pruned them appropriately. This helped reduce the number of phones impacted by a given TCN by somewhat localizing the TCN, but did not resolve the problem.

To prune trunks you use the switchport trunk allowed vlan xx,yy command, applied to the trunk interface in question.

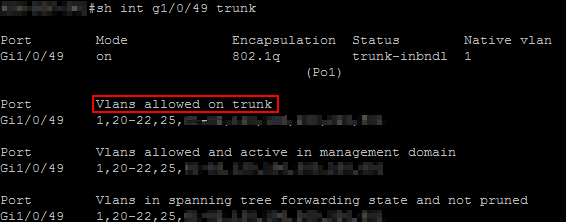

You can then use show interface xxx trunk command to see detailed information about the VLANs allowed on that trunk.

At this point we had corrected the configurations that were not Cisco “best-practices” but were still having problems, which I think surprised us all. Since we could at least reliably generate phone deregistrations, we began packet capturing one link at a time to see where the problem occurred. But before we go any further I need to revisit an earlier topic, specifically the phone to call manager keepalive process.

During our troubleshooting we discovered a bit more about how this actually works, in detail:

- Every 30 seconds, the phone sends a TCP PSH/ACK to the (primary) Call Manager server

- This causes the CM server to respond with a SCCP keepalive packet

- The phone then sends a TCP ACK back to the CM to confirm receipt of the keepalive

(Sorry, I couldn’t find my Wireshark screenshot of this.)

If the phone does not receive packet #2, the phone will disconnect from the CM server and connect to its secondary server.

If Call Manager does not receive packet #3, it appears (although unconfirmed) CM will reset the phone from its end, which forces the phone over to the secondary server. The result is the same (the phone disconnecting) but the difference is which end of the connection causes the disconnect.

The key here is that the keepalive process is actually initiated by the phone, which was the opposite of what we understood when working solely with the voice team. Now that we properly understood the process, it was far easier to interpret the packet captures we performed.

Tracing one specific path, we began capturing at our core 4510R+E and moved outwards, looking for where that PSH/ACK (packet #1 in the three-way exchange above) was being lost. The core 4510R+E never saw it. The 3750 that was next in line and serving as a building head-end distribution switch never saw it. Next up was a 2950, which likewise never saw the PSH/ACK. Finally we had a 3560G to which the phone we were testing was connected. The 3560G did see the PSH/ACK, which initially led us to believe it was dropping the packet. But we needed to pin things down a bit better. We captured the 3560G’s egress to the 2950, as well as the 2950’s ingress from the 3560G and found something extremely interesting. The capture of the 3560G egress showed multiple TCP retransmissions, yet these retransmissions were not showing on the ingress interface of the 2950.

We didn’t pursue things sufficiently to try and conclusively ascertain why exactly this was happening, but we tested the hypothesis that the 2950 was the source of the problem by removing it from this specific path. Sure enough, once it was out of the way of the phones, they stopped having problems.

Let’s back up…as I mentioned earlier, one of the things a TCN does is to force switches to quickly flush their ARP cache. We believe the old 2950s were simply unable to react quickly enough to a TCN to flush their ARP cache, begin to rebuild that cache, and simultaneously forward the PSH/ACK packet from the phones. The downstream 3560G tried to retransmit multiple times, the 2950 still couldn’t keep up, and as mentioned much earlier, there’s only a two second window for all of this to be sorted out, the PSH/ACK packet to be received by the call manager, and the rest of the handshake to take place.

Most of our phones did have 2950s upstream of them somewhere, and conversely the two locations that never had problems did not have 2950s in their path at all, so the 2950s being the source of the problem made sense. Except we had one location that did not have a 2950 in its path…or did it? It took some time for us all to realize, but due to our voice configuration, phone traffic was not switched all the way back to the core. Instead, we have SRST routers at each building; these provide backup analog service to on-site phones if they cannot reach call manager. Our voice traffic consequently flows through these routers and is routed (L3) back to our core. And sure enough, the SRST for the location “without” a 2950 in its path was – wait for it – connected to a 2950. Essentially then every phone in that building had a 2950 in its path back to the core.

We changed where the SRST was connecting into the network and that site immediately stopped having problems.

Now, I wish this story ended here, but alas it does not. At this point we had basically determined why the phones were deregistering, but a question remained: in a steady-state network TCNs should rarely be seen, yet in our case they were occurring frequently. Removing the 2950s eliminated the effects of the stray TCNs but not their cause.

To try and find the source of the TCNs, I enabled spanning-tree debugging on all our switches via the debug spanning-tree events command. This will result in log entries similar to the following:

2015-09-03 06:47:39 Local7.Debug switchname 4730: 004743: Sep 3 06:47:39.345 EDT: STP: VLAN0001 sent Topology Change Notice on Po1

We already had our switches logging to a central syslog server, so by enabling the spanning-tree event debugging, we had plenty of spanning-tree info in a centralized location. Reviewing this data very quickly showed something odd: we had one switch (a 3560G) that was sending near constant TCNs. There was nothing happening on this switch to explain all the TCNs. We rebooted the switch and that stopped the spurious TCNs, so I have to assume there was some sort of strange bug in the version of IOS we were running. (We did a code upgrade afterwards for good measure.)

Despite all this being done we were still seeing a few deregistrations, but now they were isolated to one building. Cisco found an increasing number of CRC errors on one of our fiber trunks. Reseating the SFP and fiber patch cord took care of this last problem and our phones are now working properly again.

This was both an interesting and extremely frustrating problem to work on. My staff and I all learned quite a lot from the Cisco techs as to how to pursue problems like this. Hopefully I’ve shared a little of their wisdom here. We certainly were not expecting multiple problems, and it’s amazing that a bug in one switch generating huge volumes of TCNs exposed a previously unseen performance issue with the 2950s. Ironically we had not been in a rush to replace the remaining 2950s because they were performing just fine. And while we actually don’t have many 2950s left – we’re mostly on 3560G, 2960S, and 2960X models – we are definitely going to replace them as soon as funds allow.

Thanks for reading this far. I hope it’s been helpful. As always, your comments and questions are appreciated.

Thank you for such a clear and well-written account of an adventure in troubleshooting. As a techie, I find this very entertaining.

LikeLike

This helped us immensely. We had a single fiber link in an etherchannel going into err-disabled state causing STP to regenerate about every 5 minutes. I doubt I would have found this issue without reading this article.

LikeLike

Thanks so much, I’m glad it helped!

LikeLike