We have a vSphere 5.1 cluster to which I was recently adding another host. After bringing the host online I started noticing some issues which led me to double-check my entire configuration. In the process I discovered some interesting facts about NIC teaming in vSphere that were news not only to me but also to some other admins I spoke to, and thus this blog post was born.

Fair warning: I am not a vSphere expert and there are far better resources with which you can learn about vSphere networking in general; I’m only going to touch on a few less-obvious items and enough ancillary material for everything to hopefully make sense.

NIC Teaming

To quote VMware themselves (ugh, the inconsistency in capitalization is so annoying), “when two or more [physical NICs] are attached to a single standard [vSwitch], they are transparently teamed.” (Page 15) What’s confusing, though, is that in some cases teaming isn’t really what you want, and additional manual configuration is required. Read on…

When you add a network connection to a vSphere host, there are two options: Virtual Machine or VMkernel.

The best way to configure Virtual Machine networking is going to vary quite a lot from environment to environment, so instead I want to focus on the VMkernel stack. As the GUI shows, VMkernel is actually the parent connection type for vMotion, iSCSI, NFS, and host management. I have no experience with vSphere NFS, so enough said on that topic.

Ignoring NFS gives us three VMkernel services for which we typically need to address networking: vMotion, iSCSI, and host management. Based on the various links below and other research I’ve done, it seems the most straightforward way to set this up is with a separate vSwitch for each, assuming you have enough physical NICs to provide redundancy for each vSwitch. This means you need at least six physical NICs (two for each of the three vSwitches) in addition to those used for Virtual Machine networking.
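To make the NIC math explicit, here is a tiny planning sketch. It is not a vSphere API; the function and constant names are made up for illustration, and the two-uplinks-per-vSwitch figure is my assumption about the minimum needed for redundancy.

```python
# Hypothetical planning helper; nothing here talks to vSphere.
VMKERNEL_SERVICES = ["host management", "vMotion", "iSCSI"]
NICS_PER_VSWITCH = 2  # assumption: two uplinks is the minimum for redundancy


def vmkernel_nics_needed(services, nics_per_vswitch=NICS_PER_VSWITCH):
    """Return the physical NIC count for one redundant vSwitch per service."""
    return len(services) * nics_per_vswitch


# Three services, two NICs each.
print(vmkernel_nics_needed(VMKERNEL_SERVICES))
```

If you give a service more uplinks (I use four for iSCSI), pass a different count or adjust the total by hand.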

Let’s look at each service one by one:

Host Management

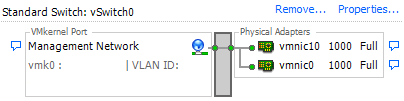

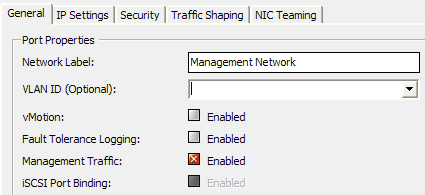

Your host management vSwitch needs a single VMkernel port, with vMotion disabled and Management Traffic enabled. The default NIC Teaming policy should be in effect (i.e. “override switch failover order” should be disabled) and all adapters are “active.” This is the easy service as there really isn’t any special configuration required.

vMotion

What’s surprising here is that despite multiple NICs being transparently teamed, a specific configuration is necessary to realize a performance benefit from multiple NICs. I’m not going to rehash content that others have already written, so check out Duncan Epping’s post here, which led to a VMware KB article explaining this process. In short, you need multiple VMkernel ports, set up so that each uses a separate active NIC. This allows vSphere to use multiple NICs for vMotion.
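As a rough illustration of the "one VMkernel port per NIC, each with a different active NIC" idea, the sketch below builds a failover plan from a list of uplinks. This is only a model in plain Python, not a call into vSphere. The port names and vmnic numbers are hypothetical, and marking the remaining NICs as standby is my reading of the linked KB article, so verify that against the article before relying on it.

```python
def plan_vmotion_ports(uplinks):
    """Build one VMkernel port per uplink, each with a different active NIC."""
    plan = []
    for index, active in enumerate(uplinks, start=1):
        # Assumption: the NICs that are not active on this port are standby.
        others = [nic for nic in uplinks if nic != active]
        plan.append(
            {"port": f"vMotion-{index:02d}", "active": [active], "standby": others}
        )
    return plan


# Hypothetical two-NIC vMotion vSwitch.
for port in plan_vmotion_ports(["vmnic2", "vmnic3"]):
    print(port)
```

The point of the exercise is that no two ports share an active NIC, which is what lets vMotion spread traffic across the physical adapters.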

iSCSI

This is a similar situation to vMotion in that an explicit configuration is required to realize the benefits of multiple NICs. In this case, you’ll need to set up multipathing with port binding even if you’re using a single storage array and all your iSCSI traffic goes through a single switch.

Start with VMware’s “Considerations for using software iSCSI port binding in ESX/ESXi” to figure out if you should be using port binding and if your network configuration supports it. “Multipathing Configuration for Software iSCSI Using Port Binding” is then the actual configuration guide. Both links are reasonably concise and easy to follow.

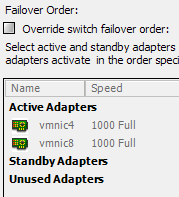

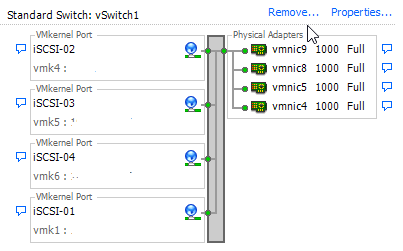

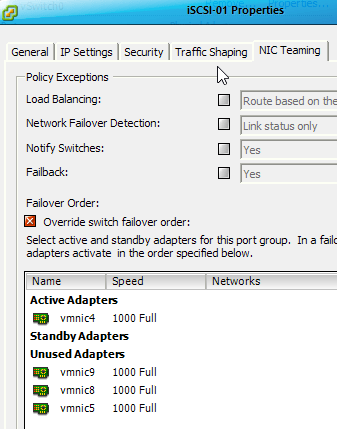

Essentially you will need one VMkernel port per NIC, each with its own IP address in the same broadcast domain as the others. Each VMkernel port should have only one active NIC (a different one for each port), with the remaining NICs set to unused.

In the screenshots, note how each VMkernel port has a different active NIC, and the others are unused. (I’m only showing two of the four I have configured, but you get the idea.)
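The port binding rules are easy to get subtly wrong, so here is a minimal sanity-check sketch that can be run against a layout written down by hand before touching the GUI. It does not query vSphere at all. The dictionary layout, port names, vmnic numbers, and IP addresses are hypothetical, and "same broadcast domain" is approximated here as "same IP subnet", which is an assumption on my part.

```python
import ipaddress


def check_port_binding(ports, network):
    """Return a list of problems with a proposed iSCSI port-binding layout."""
    problems = []
    subnet = ipaddress.ip_network(network)
    seen_active = {}  # NIC name -> first port that uses it as active
    for port in ports:
        name = port["name"]
        if len(port["active"]) != 1:
            problems.append(f"{name}: needs exactly one active NIC")
        if port.get("standby"):
            problems.append(f"{name}: remaining NICs must be unused, not standby")
        for nic in port["active"]:
            if nic in seen_active:
                problems.append(
                    f"{name}: {nic} is already active on {seen_active[nic]}"
                )
            else:
                seen_active[nic] = name
        if ipaddress.ip_address(port["ip"]) not in subnet:
            problems.append(f"{name}: {port['ip']} is outside {network}")
    return problems


# Hypothetical two-port layout; an empty list means no problems were found.
ports = [
    {"name": "iSCSI-01", "ip": "10.0.50.11", "active": ["vmnic4"],
     "standby": [], "unused": ["vmnic5"]},
    {"name": "iSCSI-02", "ip": "10.0.50.12", "active": ["vmnic5"],
     "standby": [], "unused": ["vmnic4"]},
]
print(check_port_binding(ports, "10.0.50.0/24"))
```

A check like this only catches mistakes in the plan; whether port binding suits your network at all is a question for the first VMware article linked above.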

In Summary

One of the nice things about vSphere networking is that the basic setup is pretty easy, and things will work reasonably well out of the box. It does take a little extra one-time effort to implement the setup above, but the performance and reliability improvements are worthwhile.

Thanks for reading!